When all the air gets sucked out of the room

The last five years have done a lot, in terms of "AI" and its impact on the world. The things I love on the internet are being hollowed out by the effects of scrapers and hyperscale data centres. Education is struggling with a race to the bottom, and academic publishing is in the same boat. Now what?

Five years ago, before I joined the team writing an application for a large grant on the ethical, legal, and societal aspects of AI in public safety, I was already a grump about AI. Because I am a hipster like that. I was left oddly cold by perfectly good student projects involving things like training a generative adversarial network (GAN) on photos of CEOs in order to demonstrate the reproduction of bias. Really nice work on predictive text and Markov chains did not move me, beyond appreciation of the competence involved. In short, I was not keen on perfectly legitimate and interesting uses of the kinds of “AI” that were in circulation a few years back, and found the whole field a little tiresome already. That was five years ago, back in 2021 – before ChatGPT swept onto the scene in 2022 and triggered a cascade of imitators. That was back when the best-known product from OpenAI, DALL-E, wasn’t very good at making images yet, and neither Midjourney nor Stable Diffusion had come onto the scene. Before the entire global economy was apparently being propped up by the same few companies passing the same few billions or trillions of simultaneously meaningless and profoundly impactful dollars back and forth amongst themselves. It was, in short, before the absolutely obscene boom in the “AI” industry that we’ve experienced in this short half a decade. (Or really, not half a decade, but less than four years, if we view the late-2022 release of ChatGPT as the event that opened the floodgates.)

What have we lost?

In the five years since I was irritable in 2021, everyone has become an expert on whatever the hell “AI” is. In the grant application I was helping with at the time, though the funding instrument was geared towards supporting research on the potential impacts of AI, we took great pains to point out the definitional difficulties of the term. As I am constantly grumbling, “AI” is not one thing; it is an umbrella term for a collection of different technologies and techniques, with an ostensibly shared but fairly diffuse goal. No such care is now being taken in marketing a subset of technologies/products which will apparently simultaneously take your job and save the world.

I now miss the more innocent days when I was able to be lightly irritable about cool artworks involving self-trained (or at least self-refined) computer vision and image generation models. I long for the days when clever and committed people were spending time troubleshooting software running on their own computers, trying to get it to do novel or at least meaningful things. Those days, to me, were far more exciting than the ones we live in now: the things I love on the internet are being hollowed out by the effects of scrapers and hyperscale data centres. Archive.org and the Wikimedia Foundation are struggling to buy the hard drives needed to do their work. Running a website now means weathering one big distributed denial-of-service (DDoS) attack, driven not by malice but by the avarice of companies training models on any publicly available data they can get. Education is struggling with a race to the bottom, with students and their teachers making adversarial use against each other of text generation and “AI recognition” tools. Academic publishing is in the same boat, with people on both sides of the writing and review process using large language models (LLMs) in non-transparent ways, leading to a loss of trust and a colossal waste of time.

Race to the bottom

Most of these problems are not just about tool use, but about economics. It's not the sheer existence of LLMs that is causing this situation, but the headlong rush to get rich off of them. The problem in academic publishing and the plight of education are also issues of economics. In the case of publishing, if journal articles and conference proceedings are the metrics on which your success is judged, you may become desperate enough at some point to take a shortcut and skip doing some of the writing yourself to increase your productivity. In a time when the volume of submissions for review is already high (because of those performance metrics), it doesn’t take a lot of desperate people using text-generating tools to make the review load untenable. Reviewers, peers in the field donating their time to journals out of a desire to contribute to scholarship, and out of a tacit understanding that reviewing is one of the things you do, find themselves with more and more of this volunteer labour, without any assurance that they’re spending time reviewing real work by other scholars. So why not get ChatGPT to synthesize it for you so that you can write that review a little faster, with a little less investment? It’s a race to the bottom.

And we are working so hard to normalize that race to the bottom. I can’t read or listen to news without hearing pundits, talking heads, experts, or journalists themselves normalizing the idea that this is just the world we’re living in now. Of course job seekers are writing cover letters with the assistance of the LLM of their choice – why wouldn’t they if employers are using “AI” tools in their screening processes? The utter hopelessness and lack of agency in this viewpoint blow my mind. Why should this be an “of course” scenario? Why in the world are we so readily willing to accept that, in this case, job seekers and job providers are going to work adversarially against each other in order to lighten their load? The move is then justified (in the particular panel I happened to be listening to on the topic – other mediated opinions on the subject are available) by the sheer competitiveness of the current job market. Of course companies need to automate their hiring processes: too many people are applying for the positions they list. Of course job seekers need to use LLMs to help them game their applications (or worse: use agentic tools to totally automate the application process): hundreds of people are applying for each position. It becomes so painfully convenient to rationalize the current state of affairs, and to completely naturalize the absurd arms race between, in this case, those hiring and those wishing to be hired. But the same story comes back around in all kinds of situations and fields.

A discourse air pump

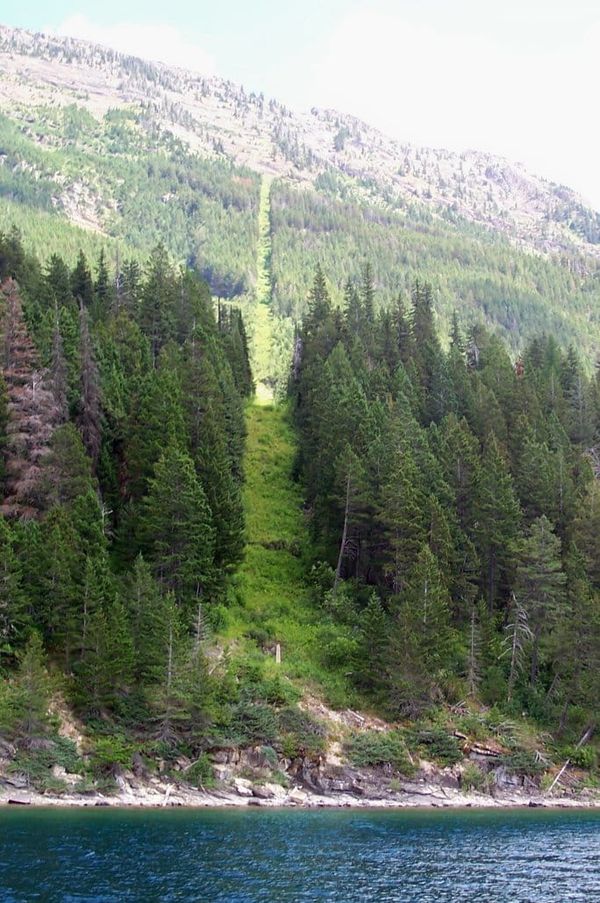

In the title of this piece, I refer to all the air getting sucked out of the room. Indeed, the accompanying image is a painting of an experiment with an air pump, sucking all the air out of a small chamber and killing a bird. You're probably already ahead of me, but the description of the painting should clinch it. I am referring to the way the current “AI” boom is sucking the air out of the room of public life, hollowing out the collective belief in alternatives, and damaging the capacity for discourse. The mass mania for a future in which “AI” takes over more and more tasks obscures other problems, possibilities, and discussions that could and should be happening now.

I’ve written previously (or if we’re being brutally honest, griped previously) about the way the flattening of language we use to discuss “AI,” and the various technologies it encompasses in different moments and for different purposes, limits the utility of the conversations we can have about specific technologies and their applications. Today, I’m here with a corollary. The society-scale obsession with this “AI” thing, and with the individuals and companies aiming to get rich or powerful (or whatever it is they’re after) off of its adoption, is sucking all the air out of the room. What conversations could we be having if we weren’t having the ones about what “AI” is doing to education, the job market, policing, labour rights, relations between people, and so on? What utterances would we be spending that air on if we weren’t caught in the throes of this obsession with obsequious probability machines? I don’t know, but I really wish I could find out.

Maybe I have it worse because I study the role of these technologies in society. Maybe reading the tech press gives me a skewed view. But I don’t think so. Arguably, my media consumption gives me access to some of the more nuanced narratives, and also some of the more critical ones. Often, in those specialized outlets, actual specific technologies are discussed, not just that old hype acronym, “AI.” In counterpoint, I am also an avid listener of the BBC World Service, arguably one of the most everyone-oriented news sources out there. In the last four days, I have heard not just about the Musk v. Altman trial, but also about, yes, the impact of “AI” on job seekers. Discussion of the current and potential impacts of “AI” on society is happening all over the place, often without the nuance that would make it more productive. Worse, it is far too easy to stumble across examples of the absolute conviction that this is what our future is, and we just need to figure out how to adapt.

Now, I normally have a point, a silver lining, or some kind of call to action. At least a take-away that provides a pointer in a hopeful direction. After all, if we have no hope that better things can happen, we have no motivation for resistance, or for building the alternatives. So what is the hope I want to point towards at this moment? All my usual calls to action feel a little trite in the face of a large-scale collapse of discourse. The best I can do with this one is point out the obvious: the boom is a boom. What look like inevitable developments are not, in fact, inevitable at all. The stories told to us by those invested in selling "AI" tools (both the positive ones about curing all illnesses, and the negative ones about a super-intelligent computational entity that wipes out humanity) are to their benefit. Having the only conversation be "AI" and its promises or risks gives yet more air to the people who want to make a comparatively quick buck off of the hollowing out of our society and ecology. We can and should be talking about other things (I know, here I am with another couple thousand words on the topic, also making the problem worse), and continuing to stretch our imaginations beyond the hype is important.

Literacy is not simply the capacity to use something. If we are being told that we must become users of "AI" tools in order to survive in the world we live in, or will be living in a few years from now, we need to approach that with criticality. The recognition that things being presented as inevitable do not, in fact, need to happen, is also a literacy. It is critical media literacy. The onslaught we're facing isn't inherently new because it's about an apparently new technology. It's the same old game of aggressively manufacturing a market by telling us that we'll be left behind if we don't comply. As the activists say, resistance is fertile. We do not need to uncritically believe the hype. We do not need to do as we're told. And next week, I'll write about something other than "AI."